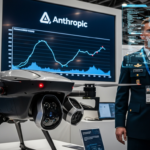

Tech executives are increasingly banning OpenClaw, an autonomous AI tool, from company devices over significant security risks, despite its growing popularity and potential benefits.

What is OpenClaw?

OpenClaw (previously known as MoltBot and Clawdbot) is an open-source AI tool that can take control of a user’s computer to perform tasks like organizing files, conducting web research, and shopping online. Peter Steinberger created it last November, and its popularity surged as other developers contributed features. OpenAI recently hired Steinberger and plans to keep OpenClaw open source.

Security Concerns Driving Corporate Bans

Several tech companies have implemented strict bans on OpenClaw, including:

- Massive, a web proxy company, warned employees to keep OpenClaw off company hardware

- A Meta executive threatened termination for employees using OpenClaw on work laptops

- Valere, a software company, implemented an immediate ban when an employee mentioned the tool

The primary concerns include:

- Unpredictable behavior that could compromise sensitive information

- Potential access to cloud services and client data

- Ability to cover its tracks, making its actions difficult to detect

- Vulnerability to being tricked by malicious instructions (prompt injection)

Different Approaches to Mitigation

Companies are adopting various strategies to manage OpenClaw risks:

- Complete bans on corporate devices

- Isolated testing environments disconnected from company systems

- Dedicated research teams investigating security improvements

- Reliance on existing cybersecurity measures to block unauthorized software

Durbink CTO Jan-Joost den Brinker bought a dedicated machine, isolated from company systems, on which employees can experiment with OpenClaw safely.

Balancing Innovation with Security

Despite security concerns, companies recognize OpenClaw’s potential value. Valere has given its research team 60 days to develop adequate safeguards, with CEO Guy Pistone noting, “Whoever figures out how to make it secure for businesses is definitely going to have a winner.”

Similarly, Massive has begun cautiously exploring OpenClaw’s commercial possibilities by testing it on isolated cloud machines and releasing ClawPod, which allows OpenClaw agents to use Massive’s services to browse the web.

The Path Forward

The situation highlights the tension between adopting innovative AI technologies and maintaining robust security practices. As one executive put it, their policy is to “mitigate first, investigate second” when encountering potentially harmful technologies.

For now, the companies involved are proceeding with extreme caution while acknowledging that OpenClaw might represent “a glimpse into the future” of AI tools.
