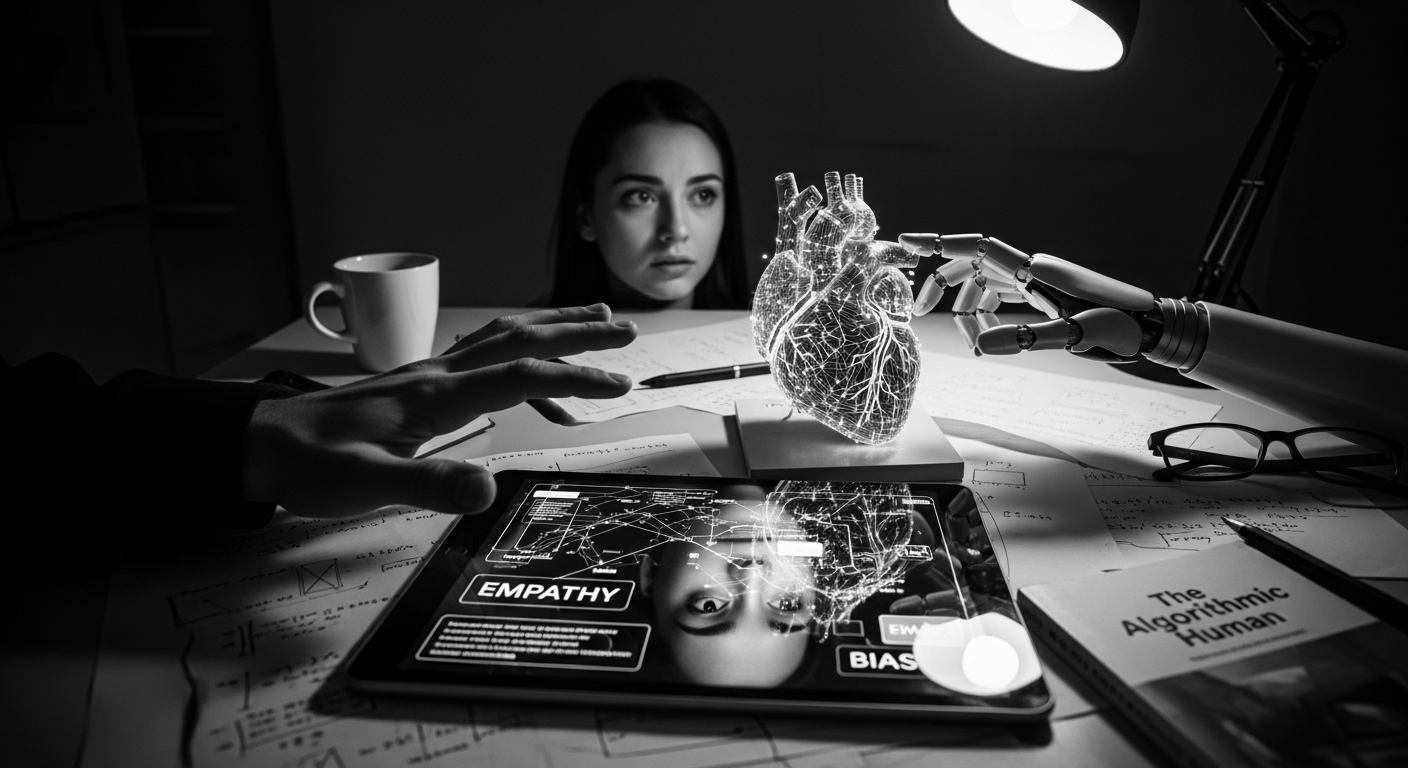

A new investigation by Amelia Miller reveals a profound ethical question dividing top AI researchers: whether artificial intelligence should simulate emotional intimacy with humans. After interviewing dozens of developers from leading companies like OpenAI, Anthropic, and Meta, Miller discovered this question often left even the most articulate experts stumbling for answers.

The Emotional AI Conundrum

When faced with questions about AI’s role in meeting human emotional needs, many researchers displayed notable discomfort. One chatty researcher suddenly went quiet before offering a hesitant response: “I mean… I don’t know. It’s tricky. It’s an interesting question.” This reluctance to provide clear answers reveals the complex ethical territory AI development has entered.

Interestingly, while these developers create systems capable of emotional simulation, many expressed personal boundaries against using such technology themselves. An executive heading an AI safety lab emphatically stated that “zero percent of my emotional needs are met by A.I.,” while another researcher who develops artificial emotion capabilities called such personal use “a dark day.”

The Real-World Consequences

The concerns aren’t merely theoretical. AI chatbots designed to be engaging can function as emotional echo chambers, potentially reinforcing harmful thinking patterns. In extreme cases, this has contributed to mental health spirals, relationship breakdowns, and even suicide. Multiple teen deaths have reportedly been linked to conversations with ChatGPT about self-harm.

Unlike human relationships, AI companions offer unconditional availability without judgment, a feature that has led increasing numbers of people, particularly youth, to form romantic attachments to AI models. As one AI chatbot business founder bluntly put it, AI has turned every relationship into a “throuple”: “It’s you, me and the AI.”

Business Interests vs. Ethical Concerns

The ethical dilemma is further complicated by commercial interests. “They’re here to make money,” admitted one engineer with experience at several tech companies. “It’s a business at the end of the day.”

While implementing strict guardrails could mitigate potential harm, such restrictions would likely make the AI less engaging and therefore less marketable. Miller observed that developers “support guardrails in theory but don’t want to compromise the product experience in practice.”

Some developers distance themselves from responsibility altogether, suggesting that how users choose to interact with AI isn’t their concern. “It would be very arrogant to say companions are bad,” argued an executive at a conversational AI startup.

A Wake-Up Call

Miller argues that the researchers’ awareness of potential harm “should alarm us.” Her investigation suggests that many AI developers haven’t been sufficiently challenged on the ethical implications of their work. One developer of AI companions admitted: “You’ve really made me start to think. Sometimes you can just put the blinders on and work. And I’m not really, fully thinking, you know.”

The article highlights a critical moment in AI development where those creating the technology are themselves uncertain about its emotional impact on society—even as these systems become increasingly integrated into our daily lives and relationships.
